Adapting Text Augmentation to Industry problems

In this post, I will talk about recent advances in exploiting language models for data generation and show how and where we can apply them in industry.

Context

The problem with data augmentation in NLP is that language is order-sensitive, i.e., changing the word order can change a sentence's syntax and thereby its semantics. Despite these stringent constraints, data augmentation in NLP has picked up significant pace recently.

The NLP community on Twitter took notice with the paper Easy Data Augmentation (EDA), followed by a popular blog post on data augmentation techniques. The basic techniques include lexical substitution, robustness to spelling variations, etc. Exploiting language models is a newer addition to the catalogue, and this post focuses exclusively on it.

Use-case scenarios

For this post, I would like to consider two industry tasks where augmentation can help:

- Use case #1: Categorizing complaints/survey responses into specific categories (classification task).

  Let's say that you are a computer tech giant like HP or Microsoft and want to sort your incoming messages into clear buckets like Hardware, Customer Care, Software, General Support, Tech Support, Refund, etc.

- Use case #2: Redacting sensitive information from the data (sequence labeling task).

  Let's say that you are an independent survey firm documenting COVID-19 statistics. To respect the privacy of the individuals involved and to avoid targeted harassment by the public, some statements must be stripped of their key details. For example:

  In Boston Street #4, the infected individuals were two Asian students, aged 22 and 25.

Leveraging Language models

This section covers these newer techniques and shows how they can be adapted to the use cases above.

1. Vanilla method

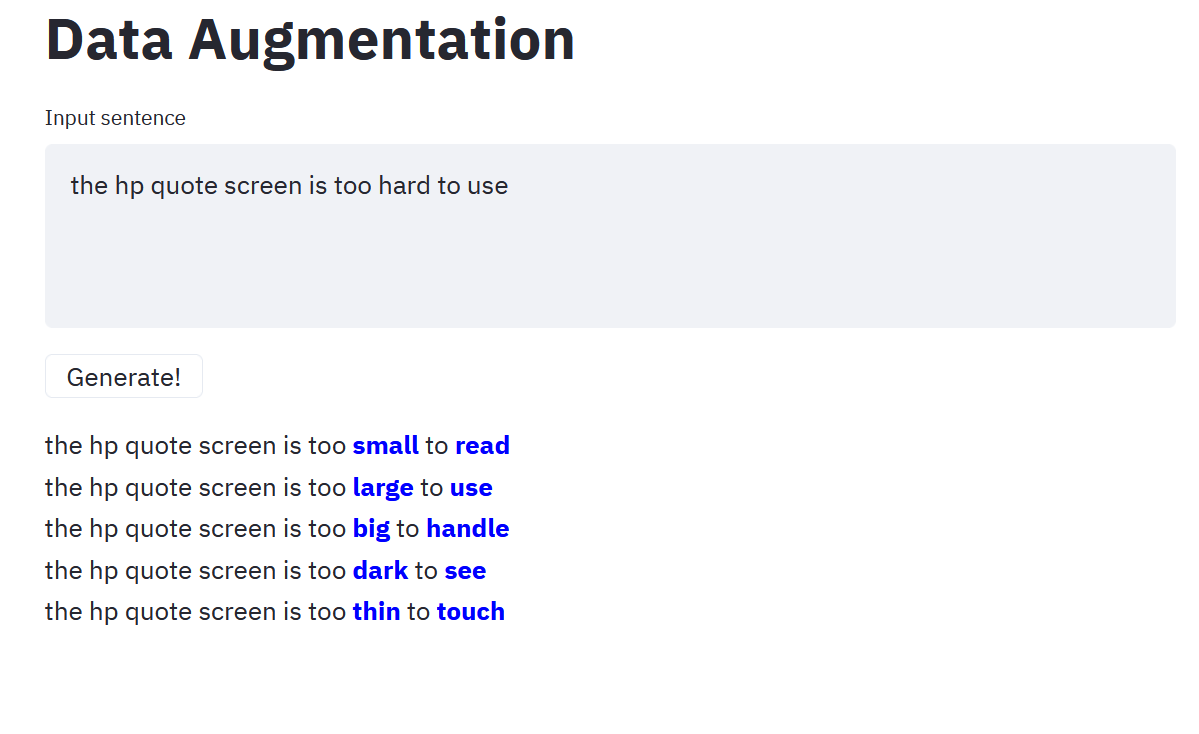

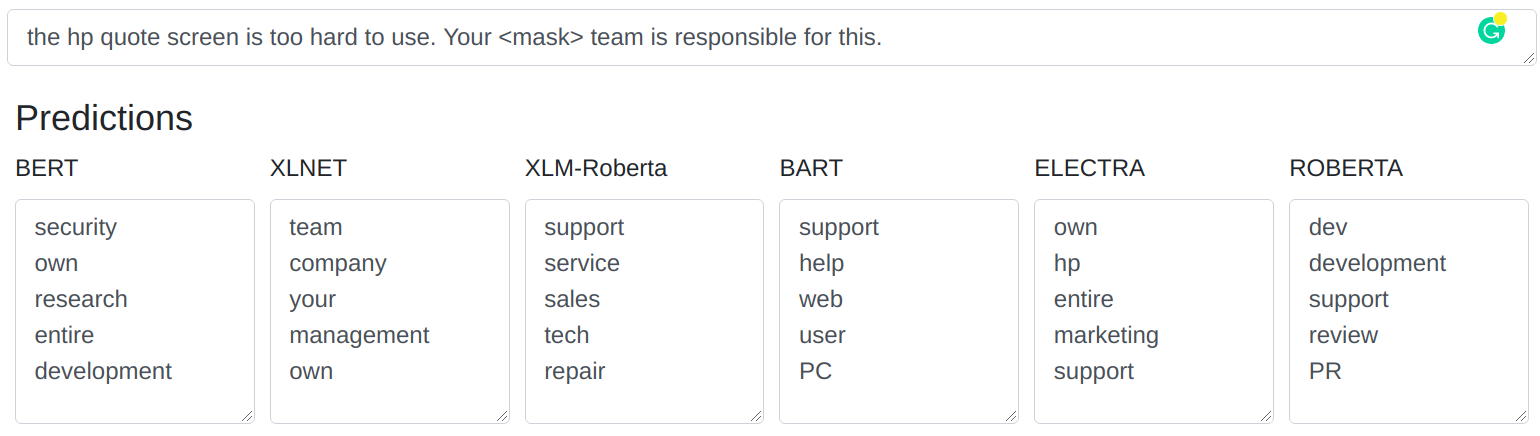

This is as simple as asking a masked language model like BERT or RoBERTa to fill in the <mask> tokens. This way, we can generate synthetic variants of a given sentence.

Info: In the image below, the app masks random words (if none are masked already) and predicts each <mask>.
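A minimal sketch of the vanilla method. The `predict` argument is a hypothetical callable standing in for a masked LM: it takes a sentence with one `<mask>` and returns candidate words, best first. Whitespace tokenization is a simplification.

```python
import random
from typing import Callable, List

MASK = "<mask>"  # RoBERTa's mask token; BERT uses "[MASK]"

# Hypothetical interface: sentence with one MASK -> candidate words, best first.
FillMask = Callable[[str], List[str]]


def augment_by_masking(sentence: str, predict: FillMask,
                       n_variants: int = 3, seed: int = 0) -> List[str]:
    """Create up to n_variants sentences by masking one random word at a time
    and letting the LM fill it in with a word different from the original."""
    rng = random.Random(seed)
    words = sentence.split()  # naive whitespace tokenization
    variants = []
    for _ in range(n_variants):
        i = rng.randrange(len(words))
        masked = words[:i] + [MASK] + words[i + 1:]
        for candidate in predict(" ".join(masked)):
            if candidate != words[i]:  # skip predictions that just restore the input
                variants.append(" ".join(words[:i] + [candidate] + words[i + 1:]))
                break
    return variants
```

With a real model, and assuming Hugging Face transformers is installed, `predict` could be `lambda s: [p["token_str"].strip() for p in fill_mask(s)]` where `fill_mask = pipeline("fill-mask", model="roberta-base")`.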

1.1 Applying to the use-case

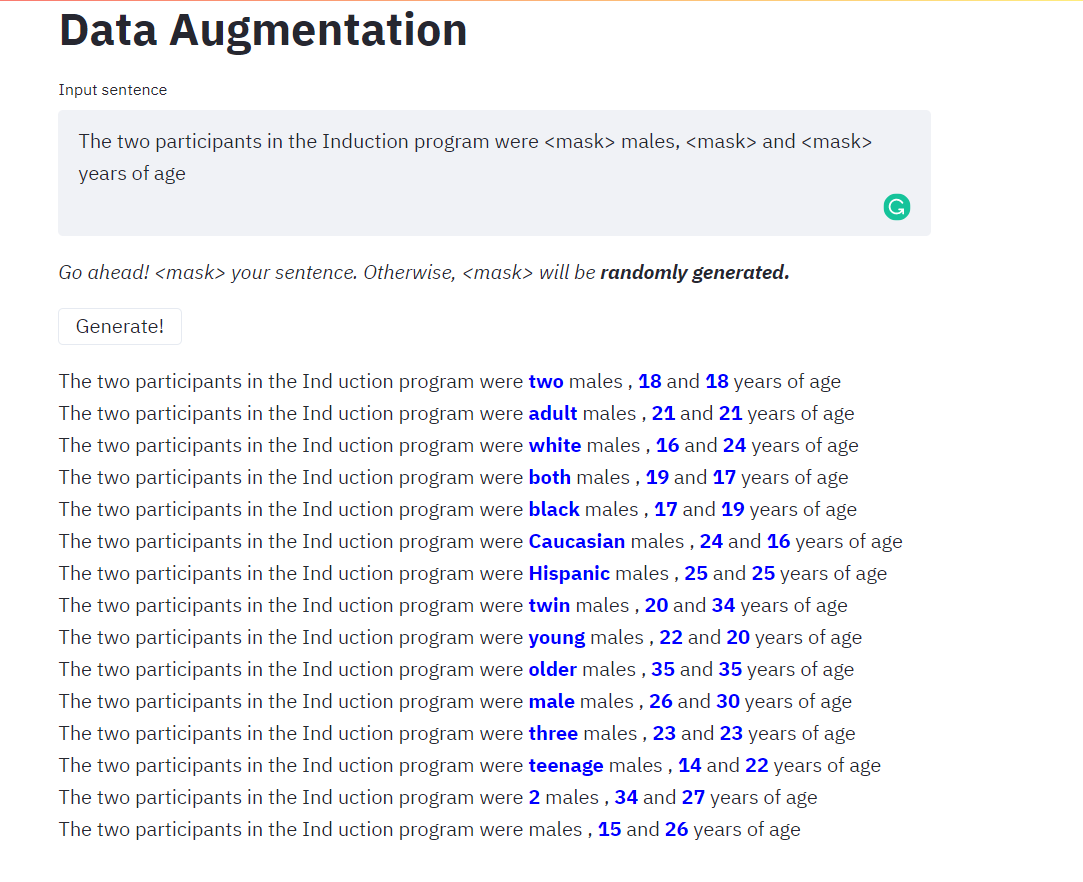

This vanilla method can be applied directly to use case #2, i.e., redaction. Let's consider the following sentence:

The two participants in the Induction program were white males, 6 and 9 years of age.

Here, we can generate multiple variants for race and age: mask these occurrences and let the language model predict them. The generated variants can help address the entity-level imbalance issue.
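A sketch of entity-targeted masking for redaction data. Every token tagged with the chosen entity type is masked, and each LM suggestion yields a new sentence whose tags are copied unchanged, so tokens and labels stay aligned. The tag names (`RACE`, `AGE`) and the `predict` callable (masked sentence in, candidate words out) are hypothetical.

```python
from typing import Callable, List, Tuple

MASK = "<mask>"
FillMask = Callable[[str], List[str]]  # hypothetical LM wrapper, best candidates first


def augment_entities(tokens: List[str], tags: List[str], target_tag: str,
                     predict: FillMask, top_k: int = 3
                     ) -> List[Tuple[List[str], List[str]]]:
    """For each token tagged `target_tag`, mask it, ask the LM for replacements,
    and emit new (tokens, tags) pairs with the tag sequence left unchanged."""
    examples = []
    for i, tag in enumerate(tags):
        if tag != target_tag:
            continue
        masked = tokens[:i] + [MASK] + tokens[i + 1:]
        candidates = [c for c in predict(" ".join(masked)) if c != tokens[i]]
        for c in candidates[:top_k]:
            examples.append((tokens[:i] + [c] + tokens[i + 1:], list(tags)))
    return examples
```

Note that nothing here stops the LM from proposing a word that is not a race (for example "tall"); that is exactly the noise problem described next.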

In the method above, I conveniently ignored the fact that there can be class shifters as well, i.e., newly predicted words can change the category of the sentence. For example, we want the word “white” to be replaced only by races like Caucasian or Hispanic, but we can see that other predicted words don't necessarily represent a race. Such predictions are as good as noise.

This is handled in Section 3.
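Before the conditioning approach of Section 3, a crude post-hoc baseline (an illustration, not a method from the papers discussed here) is to over-generate and keep only candidates that still fit the entity type. The validators below are hypothetical and hand-written.

```python
from typing import Callable, Dict, List

# Hypothetical, hand-written checks for whether a word still fits an entity type.
VALIDATORS: Dict[str, Callable[[str], bool]] = {
    "RACE": lambda w: w.lower() in {"asian", "black", "caucasian", "hispanic", "white"},
    "AGE": str.isdigit,
}


def filter_class_shifters(candidates: List[str], entity: str) -> List[str]:
    """Drop LM predictions that would change the entity's class.
    Unknown entity types keep nothing, to stay on the safe side."""
    is_valid = VALIDATORS.get(entity, lambda w: False)
    return [c for c in candidates if is_valid(c)]
```

The obvious drawback is that the lists must be maintained by hand and the LM still wastes most of its predictions, which is why steering the model itself is preferable.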

2. Pattern-Exploiting Training (PET):

2.1 Intro:

PET is the method introduced in the paper Exploiting Cloze Questions for Few Shot Text Classification and Natural Language Inference. In this paper, the authors use language models to assign soft labels to unlabeled data, thereby creating larger datasets for improved supervised learning.

To obtain soft labels that are relevant to the task, the authors suggest reformulating the input sentence as a cloze-style phrase, which helps the language model identify the target task.

For example, let’s take a review:

Awesome drink specials during happy hour. Fantastic wings that are crispy and delicious, wing night on Tuesday and Thursday!

In order to tag the above sentence by exploiting LMs, authors suggest introducing supporting phrases/templates. For the above example, we can use

All in all, it was a

<mask>experience

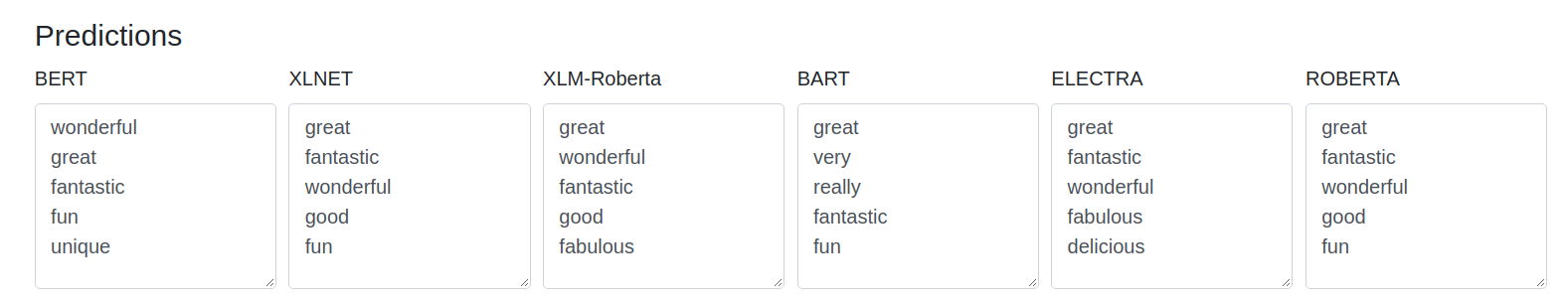

as a supporting phrase and make the LMs predict the mask. After appending this template sentence to our review, let us review what does the LMs predict now:

Awesome drink specials during happy hour. Fantastic wings that are crispy and delicious, wing night on Tuesday and Thursday! All in all, it was a

<mask>experience

We can observe that predicted words like wonderful, great and fantastic in Figure 3 suggest that the review is positive.
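The pattern can be written as a small function. `VERBALIZER` is a hypothetical map from each label to words we expect the LM to predict, and `vote` is only a rough tally for eyeballing predictions like those in Figure 3; it is not PET's actual scoring rule.

```python
from typing import Dict, List

MASK = "<mask>"


def pattern(review: str) -> str:
    """P(x): append the cloze template from this post to the review."""
    return f"{review} All in all, it was a {MASK} experience."


# Hypothetical label words per class.
VERBALIZER: Dict[str, List[str]] = {
    "positive": ["great", "wonderful", "fantastic"],
    "negative": ["bad", "terrible", "disappointing"],
}


def vote(top_predictions: List[str]) -> Dict[str, int]:
    """Count how many of the LM's top predictions belong to each label."""
    return {label: sum(w in words for w in top_predictions)
            for label, words in VERBALIZER.items()}
```

For example, if the LM's top predictions were `["great", "wonderful", "fantastic", "amazing"]` (made-up output), `vote` would return `{"positive": 3, "negative": 0}`.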

2.2 Narrowing down the search space

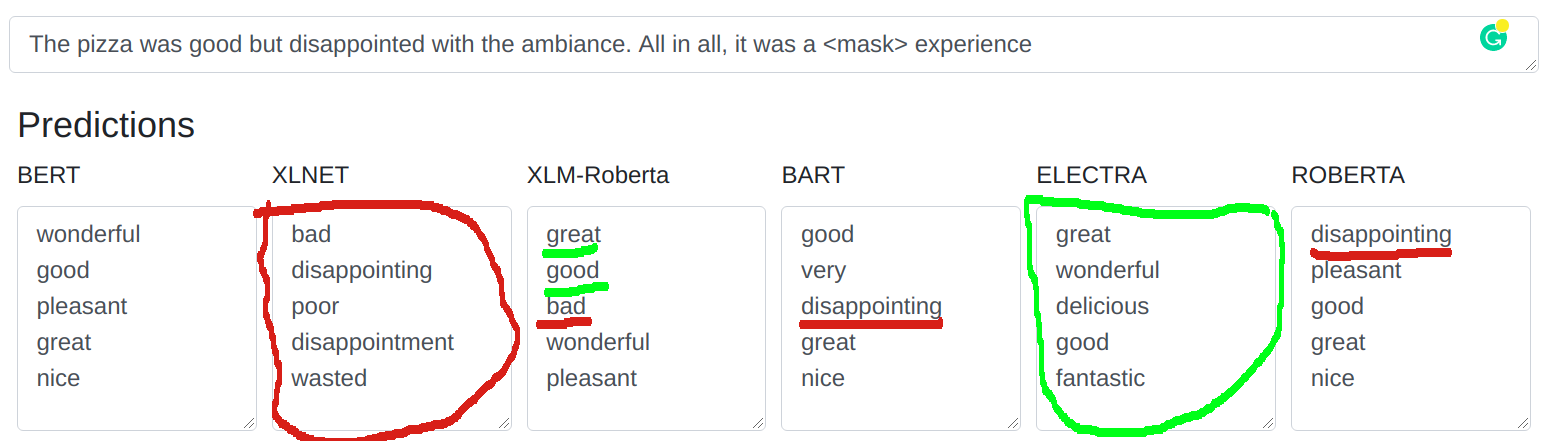

Let’s consider another review,

Pizza was good but disappointed with the ambiance.

When you want to build a positive/negative classifier, sentences like this fall into a gray area: the review counts as positive if we consider taste and negative if we consider ambiance. This is where you need to inject your own (human) bias to let the model know which aspect you care about: ambiance or taste.

If taste is the criterion you want to consider, then predicted words like bad and disappointing (red) need to be replaced with positive words (green). To do this, you can fine-tune the LM to predict words of your choice. Details such as how multiple words are mapped to a class label are out of scope for this post. Fine-tuning towards words of your choice narrows the search space of predicted words down to your domain.
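In PET, the narrowed search space is explicit: each label is tied to a label word (the verbalizer), the LM's logit for that word at the `<mask>` is read off, and a softmax is taken over the label words only; fine-tuning then minimizes cross-entropy on this restricted distribution. A minimal sketch, assuming one label word per class and hypothetical logits passed in as a dict:

```python
import math
from typing import Dict


def soft_labels(word_logits: Dict[str, float],
                verbalizer: Dict[str, str]) -> Dict[str, float]:
    """Score each label by the logit of its label word at the <mask>, then
    softmax over labels only; every other vocabulary word is ignored."""
    labels = list(verbalizer)
    scores = [word_logits[verbalizer[label]] for label in labels]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return {label: e / total for label, e in zip(labels, exps)}


def cross_entropy(probs: Dict[str, float], gold: str) -> float:
    """Loss that pushes the LM toward the chosen label word for `gold`."""
    return -math.log(probs[gold])
```

For the pizza review with a taste-oriented verbalizer such as `{"positive": "good", "negative": "bad"}`, a high logit for "disappointing" has no effect, because that word is not a label word.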

This method can be applied to use case #1, i.e., categorizing complaints.

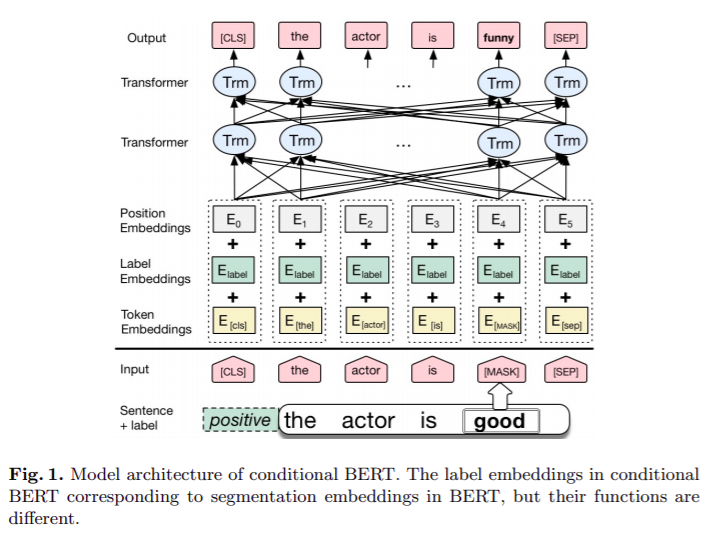
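For use case #1, the same recipe can soft-label unlabeled complaints, which are then used to train an ordinary classifier. The template, the label words and the `score` callable (the LM's logit for a word at the `<mask>`) are all hypothetical; in PET, each label word should also be a single token in the LM's vocabulary.

```python
import math
from typing import Callable, Dict, List, Tuple

MASK = "<mask>"

# Hypothetical label words for some of the buckets in use case #1.
VERBALIZER: Dict[str, str] = {
    "Hardware": "hardware",
    "Software": "software",
    "Refund": "refund",
    "Customer Care": "service",
}

# Stand-in for a masked LM: (cloze text, word) -> logit of word at the mask.
WordScorer = Callable[[str, str], float]


def pattern(message: str) -> str:
    return f"{message} This complaint is about {MASK}."


def soft_label(message: str, score: WordScorer) -> Dict[str, float]:
    cloze = pattern(message)
    logits = {label: score(cloze, word) for label, word in VERBALIZER.items()}
    top = max(logits.values())
    exps = {label: math.exp(v - top) for label, v in logits.items()}
    z = sum(exps.values())
    return {label: e / z for label, e in exps.items()}


def build_training_set(messages: List[str], score: WordScorer
                       ) -> List[Tuple[str, Dict[str, float]]]:
    """Pair each unlabeled message with its soft label distribution."""
    return [(msg, soft_label(msg, score)) for msg in messages]
```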

3. Conditioning your input:

Another way to handle class shifters is to tell the LM explicitly what you want. The authors of Conditional BERT (C-BERT) approached this by feeding the desired class label to the model as an extra input, through a label embedding.

Varun Kumar et al. generalized this idea by prepending the class label to the text, which also works for auto-regressive language models like GPT-2 and seq2seq language models like BART.
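A sketch of label-prepended inputs, loosely following the description in Kumar et al.; the separator string and the word-masking probability are assumptions, and only input construction is shown (fine-tuning and decoding are omitted). `<|endoftext|>` is GPT-2's end-of-text token.

```python
import random

SEP = " [SEP] "           # assumed separator; use your model's own special tokens
EOS = " <|endoftext|>"    # GPT-2's end-of-text token
MASK = "<mask>"


def gpt2_train_text(label: str, text: str) -> str:
    """Fine-tuning text for an autoregressive LM: label, separator, sentence."""
    return f"{label}{SEP}{text}{EOS}"


def gpt2_prompt(label: str) -> str:
    """At generation time, prompt with the label and let the LM continue."""
    return f"{label}{SEP}"


def bart_input(label: str, text: str, mask_prob: float = 0.2, seed: int = 0) -> str:
    """Seq2seq variant: prepend the label and mask some words; the model is
    trained to reconstruct the original sentence from this input."""
    rng = random.Random(seed)
    words = [MASK if rng.random() < mask_prob else w for w in text.split()]
    return f"{label} {' '.join(words)}"
```

Because the label is part of the input, the model learns to generate text consistent with it, so a "Refund" prompt should not drift into, say, a hardware complaint.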

However, the conditional-input method must be changed slightly to adapt it to use case #2, i.e., redaction/NER: instead of one label for the whole sentence, the condition is given per token. If you want a particular masked word to be a “race”, feed the label “race” at its position in the label-embedding layer. The same goes for other entities like age, country, etc.
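A sketch of the per-token conditional input: the masked token sequence plus an aligned sequence of label ids that would index a label-embedding table. The tag vocabulary is hypothetical, and real BERT tokenizers split words into subwords, in which case each word's tag would have to be repeated for its subwords.

```python
from typing import Dict, List, Sequence, Tuple

MASK = "<mask>"


def conditional_ner_input(tokens: List[str], tags: List[str],
                          mask_positions: Sequence[int],
                          tag_vocab: Dict[str, int]) -> Tuple[List[str], List[int]]:
    """Build a masked token sequence plus a per-token label-id sequence.

    The label ids are meant to index a label-embedding table whose vectors are
    added to the token embeddings (C-BERT uses label embeddings in place of
    BERT's segment embeddings). Keeping the entity tag at a masked position
    tells the LM what kind of word, e.g. a race, to generate there.
    """
    if len(tokens) != len(tags):
        raise ValueError("tokens and tags must be aligned")
    to_mask = set(mask_positions)
    masked = [MASK if i in to_mask else tok for i, tok in enumerate(tokens)]
    label_ids = [tag_vocab[tag] for tag in tags]
    return masked, label_ids
```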

Conclusion

In this post, we went through papers like PET, C-BERT and others to discuss recent methods for exploiting language models for data augmentation. We also discussed how to adapt these methods to industry problems.

References:

- Varun Kumar et al. -> Data Augmentation using Pre-trained Transformer Models

- Xing Wu et al. -> Conditional BERT Contextual Augmentation

- Jason Wei, Kai Zou -> Easy Data Augmentation

- Marco Tulio Ribeiro et al. -> CheckList paper and repo

- Renato Voilin -> next-word prediction repo

- M. Manohar -> freemiya nlp-helper repo

Appendix

Libraries like nlpaug, eda_nlp and TextAttack already offer many of these methods, and CheckList and TextAttack use contextual models to generate test cases and adversarial attacks.
